Storage is generally a secondary aspect: because compute and storage are separated (at least with Serverless and the newer RA3 nodes), storage capacity is more or less "unlimited" and has only a minor impact on the total cost of a Redshift installation. About 20 hours of ra3.xlplus, or 8 hours of Redshift Serverless at 8 RPU, cost roughly as much as storing 1 TB for a month. The cost driver is therefore generally the usage cost of the servers, not the data volume.

The ability to scale a database seems to solve these issues easily. However, we do not recommend relying on scaling alone as a solution: experience has shown that the development patterns described next usually have a much greater influence on query performance and are much more cost-effective to implement.

Delta processes and sort keys are usually the biggest "levers", as they easily allow performance gains by a factor of 100 to 1,000 by significantly lowering the amount of data that has to be processed. With delta processing, instead of 10 years of history, only the last month or the last few days are processed, according to needs. These development patterns are quite easy to apply and should always be part of data engineering.

The other best practices have less of an impact:

- Reduction in data through a wise choice of data model (e.g. …)
- Reduction in data communications between cluster nodes through the right choice of distribution keys
- Import from / export to S3 using compressed or column-based data formats (e.g. …)
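To make the delta-processing idea concrete, here is a minimal Python sketch. It is not Redshift code: the function name `select_delta`, the row layout, and the sample data are hypothetical and serve only to show how restricting a job to a recent window shrinks the number of rows it has to touch.

```python
from datetime import date, timedelta


def select_delta(rows, today, lookback_days):
    """Return only the rows whose event date falls inside the lookback window."""
    cutoff = today - timedelta(days=lookback_days)
    return [row for row in rows if row["event_date"] >= cutoff]


# Hypothetical data: one row per day over roughly ten years.
today = date(2024, 1, 1)
history = [
    {"event_date": today - timedelta(days=n), "amount": 1.0}
    for n in range(3650)
]

# Process only the last 30 days instead of the full history.
delta = select_delta(history, today, lookback_days=30)

# Ratio of rows in the full history to rows in the delta.
reduction_factor = len(history) / len(delta)
print(len(history), len(delta), round(reduction_factor))
```

In a real warehouse the same filter would be a date predicate on the load or transformation query, ideally on a column that is also the sort key, so that blocks outside the window can be skipped.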

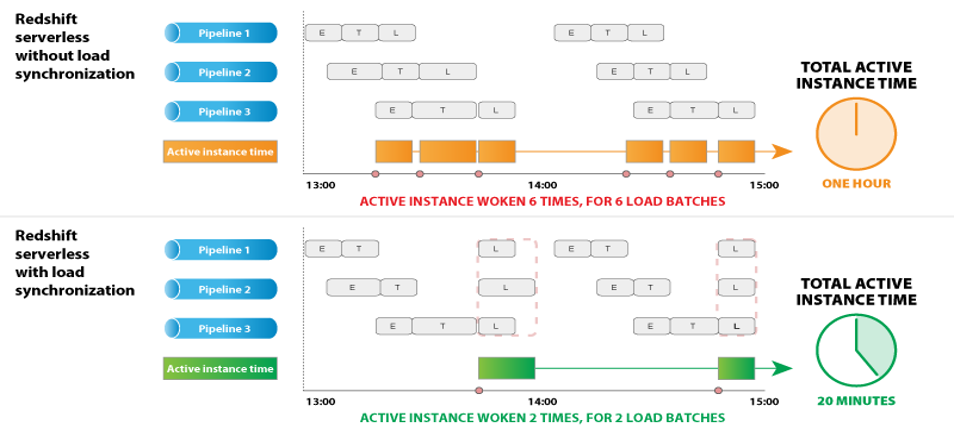

For hourly processing to make sense at all, the processes involved should each run for just a few minutes. The requirement here is to process the freshest possible data and keep reporting up to date. Processes needed at intervals of minutes or seconds, i.e. streaming processes, must likewise run correspondingly fast to prevent data backlogs. This requires an understanding of the data use cases (requirements), query patterns and changes in data volume over time, so that proactive adjustments can be made where necessary.

Factors influencing query performance for end users / BI tools:

Complexity of the SQL statements and user expectations in the areas of reporting and ad-hoc analytics: more than 95% of all queries should complete within seconds, while the remaining 5% (if they do not run constantly) may take minutes. […] month-end closing statement) which are executed very rarely and should not affect sizing.

Ultimately, the sizing of a DWH is settled exactly when all these requirements are fulfilled.
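The ">95% within seconds" expectation can be checked against measured query runtimes. The following Python sketch assumes that "within the range of seconds" means at most 10 seconds; that limit, the function names, and the sample runtimes are illustrative assumptions, not values from this article.

```python
def share_within(durations_s, limit_s):
    """Fraction of query runtimes (in seconds) that are at or below limit_s."""
    if not durations_s:
        raise ValueError("no query runtimes given")
    fast = sum(1 for d in durations_s if d <= limit_s)
    return fast / len(durations_s)


def meets_target(durations_s, limit_s=10.0, min_share=0.95):
    """True if more than min_share of the queries finish within limit_s.

    limit_s=10.0 is an assumed reading of "within the range of seconds".
    """
    return share_within(durations_s, limit_s) > min_share


# Hypothetical measurements: 96 fast queries and 4 slow ones.
good_day = [2.0] * 96 + [120.0] * 4
# Hypothetical measurements: 90 fast queries and 10 slow ones.
bad_day = [2.0] * 90 + [120.0] * 10

print(meets_target(good_day), meets_target(bad_day))
```

Rarely executed jobs would be excluded from `durations_s` before the check, since they should not affect sizing.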
